Cynefin, the sensemaking framework, is a very useful tool for helping teams and organizations approach problems more effectively. It acknowledges that different types of problems require different solution approaches. To celebrate the 21st anniversary of the framework, an experienced group of Cynefin practitioners wrote a book, Cynefin – Weaving Sense-Making into the Fabric of Our World, highlighting their experiences with the framework and its use. I was pleased to contribute a chapter.

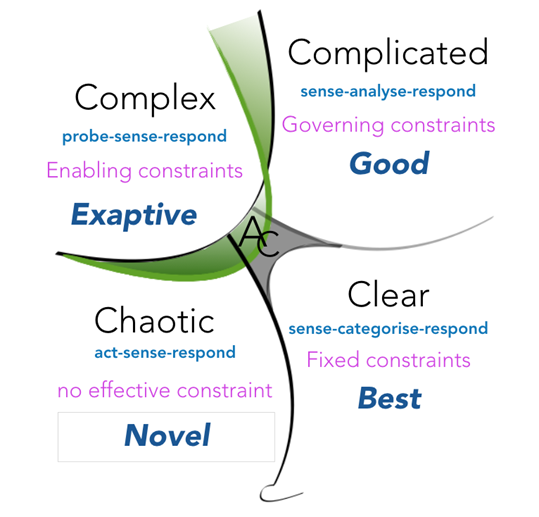

Cynefin posits five problem domains, and since the latest update to the framework, they all start with the letter “C”:

• Clear: Address the issue by following a protocol or recipe.

• Complicated: Bring in the right expertise.

• Complex: Use experimentation to learn more.

• Chaotic: Act swiftly to stabilize the system, then reassess.

• Confused: We cannot agree on the right approach.

You can read more about each of these here.

New Technology Creates Information Overload

My chapter in the book focuses on a historical problem without a clearly defined answer—the introduction of the U.S. Navy’s Combat Information Center (CIC) during World War II. The CIC was prompted by the need to make sense of unprecedented levels of information created by new technologies. Before the war, the Navy had invested in new technologies like radar, ultra-high frequency radios, and sonar. All these technologies created new and valuable information, but the Navy’s shipboard organizations found it very difficult to put that information to good use.

The problem wasn’t the new technologies or the quality of information they provided. The problem was that all the new information overwhelmed the people trying to keep track of it. Ship captains had been accustomed to receiving incoming reports, analyzing the data in them, then determining what to do. Now, reports came in too quickly. Fragments of valuable information arrived faster than ship captains could analyze them. They lost track of things and made mistakes. It was clear that a better approach was needed, but there was uncertainty—and disagreement—about how to approach it.

A Complex Problem

That disagreement suggested it was a complex problem, because when experts disagree, the relationship between cause and effect is uncertain. Cynefin advises that complex problems require multiple parallel experiments to better understand available alternatives and explore possibilities. That is exactly how the Navy approached it. The commander of the Pacific Fleet, Admiral Chester W. Nimitz, defined a goal. Each ship would create a CIC. Nimitz explained that the CIC would take in available information, make sense of it, and provide it to the ship’s captain and weapons systems in an actionable format. But Nimitz deliberately refrained from telling his ships how to organize a CIC.

Safe-to-Fail Experiments

Nimitz’s instructions set off a series of parallel, safe-to-fail experiments. Each ship worked to create a CIC. Different ships approached it in different ways, and those differences created rich and valuable feedback about which patterns worked and which didn’t. As Nimitz and his colleagues developed a better understanding of those patterns, they provided more detailed guidance about how to organize and operate a CIC. A virtuous cycle of learning and continuous improvement emerged. I’ve only summarized the situation here. If you are interested in learning more, the chapter goes into more detail about the learning dynamics and the challenges the Navy overcame.

Lessons Learned from History

I value this story because it illustrates how concepts from Cynefin have been shown to be effective in the real world. It also highlights the limitations of technology. New technologies can be disruptive, and to take full advantage of their potential, we often need to explore alternatives. That’s what the Navy had to do with radar, and it’s what many organizations are experiencing today with AI, Cloud technologies, Agile, and DevSecOps. Just adopting the technology isn’t enough. Organizational structures and behavioral patterns must change. Often, the best way to identify the necessary changes is to use parallel experimentation, as Cynefin recommends for complex problems. It frequently takes an outside expert to know the patterns that work and apply them effectively.